How Pre-Release Simulation Makes AI Agents Reliable

One of my biggest fears while releasing AI agents was their unpredictable behaviour in production. You can test in staging, run evals on golden datasets, and even have your team dogfood the agent, yet the moment real users arrive, everything breaks. As AI agents move into customer-facing roles, these unpredictable moments can lead to regulatory risk, revenue loss, or reputational damage.

This isn’t just my experience. Nearly all the 100+ AI leaders we’ve spoken to in the past three months expressed the same concern. And recent real-world incidents highlight exactly why.

- Air Canada’s chatbot misinformed a customer about a bereavement refund policy, and when the airline claimed the chatbot was a “separate legal entity,” a Canadian tribunal ruled that Air Canada was fully responsible.

- McDonald’s AI drive-through system was discontinued across more than 100 locations after it confidently added bacon to ice creams and placed hundreds of unintended McNugget orders.

A single incorrect conversation can trigger a PR crisis or carry a heavy cost for a business. But does that mean you shouldn’t deploy conversational AI agents? Probably not. It simply means you need a reliable process to evaluate your agents for these rare but high-impact failure cases before launch.

How AI Teams Test Today

Testing AI agents has evolved dramatically since the pre LLM era when all we needed was a simple train validate test split for our models. Yet the core discipline of error analysis remains unchanged. The performance of any model or agent still depends on the quality and scale of its evaluation datasets and how rigorously teams iterate on them.

Even today, nearly 80% of an AI engineer or data scientist’s time goes into error analysis, generating traces whether synthetic or real, running them against new model versions, evaluating outcomes, identifying behavioural issues, and refining the system until a domain expert approves the results. This feedback loop hasn’t gone away. Even as LLMs continue to improve, error analysis remains the most critical process for understanding failure modes. After all, an LLM can’t read your mind, and research consistently shows that people must observe and interpret an LLM’s behaviour to clearly articulate and refine their expectations.

But most of this evaluation happens after the agent is deployed. This works when you finally have real users traces. Pre-release, you don’t have this data, and existing workflows simply don’t scale to fill that gap. This is where the challenge begins.

Why Pre-Release Testing Is Harder Than It Looks

Post-production, you can analyze agent traces through tools like Langfuse or Arize AI. However, when launching a new agent or iterating to unlock new capabilities, testing before release is difficult. The only way to generate representative traces is to manually spend days dogfooding the agent internally.

When I was building a conversational agent for cancer patients, for example, we initially had plenty of traces from users in tier-1 cities. But as we expanded to tier-2 cities, user queries and communication styles changed dramatically. We eventually had to hire people from those regions and ask them to use the agent so we could understand where it performed well or failed, and then improve it accordingly.

Even when iterating on existing agents, it is impossible to replay real user traces because the behaviour of the new agent changes and therefore user behaviour changes too. Manual evaluations don’t scale, as evaluation criteria tend to shift whenever user behaviour or agent requirements evolve.

So if traditional testing methods fall short, how can teams truly understand before launch how their agents behave at scale?

The answer lies in simulation.

The Missing Layer: Realistic User Simulation

Running representative user simulations is essential to understanding an AI agent’s pre-release behaviour. Simulations must reflect real production users, using data grounded in actual user traces, customer feedback, and behavioural patterns. Poorly simulated users can be misleading: they may fail evals that have almost zero probability of occurring in production, distracting teams and wasting time.

“Self driving teams run millions of simulated miles before putting a car on the road. AI teams should do the same for agents.”

Guardrails for AI agents are particularly difficult to simulate because the errors they prevent are low frequency but high impact in production. Building realistic guardrail tests is difficult since failures can arise from multiple directions and result in unintended consequences.

For example, a Belgian man took his own life after an AI chatbot without proper safety guardrails encouraged him to sacrifice himself to “save the planet.” The chatbot lacked essential mechanisms to detect suicidal intent or provide crisis intervention, allowing the harmful exchange to escalate unchecked.

And even when guardrails exist, user interaction varies across user personas. A 30-year-old male from the United States will interact differently from a 60-year-old female from Spain. These variations shift expectations, tone, and interpretation. These variations highlight why guardrails alone aren’t enough; they must be validated systematically before release.

What Good Pre-Release Test Data Looks Like

A strong pre-release test begins with realistic scenarios grounded in production behaviour from real user traces, customer feedback, and the agent’s actual product requirements. These scenarios are then simulated across diverse personas, and each conversation is evaluated against clear KPIs. Defining these evals is usually straightforward; the real challenge is continuously refining them with every release and ensuring your simulations stay aligned with real-world user behaviour.

The harder part is achieving complete coverage of both functional and safety scenarios across the entire user journey and the diverse situations that may arise. Scenarios should include user behaviour from production traces, customer feedback, SOPs, API documentation, and guardrails, as well as general edge cases and the continuously evolving behaviour and feedback of users.

Finally, the simulations should be realistic compared to production traces, originating from the same distribution and reflecting recurring trends and seasonal behaviour shifts.

Automating Pre-Release Testing for Every Release

Once you analyze production logs, patterns emerge: functional gaps, reasoning failures, edge cases, safety issues, and unexpected model behaviours. As an AI engineer all my life, I have learned that while it is relatively easy to come up with potential fixes for these identified issues, whether prompt adjustments, tool call refinements, or memory layer changes. The hard part is verifying that these fixes actually improve the agent without breaking something else.

This is where simulation becomes essential.

Running simulations on both the previous and current versions and comparing their performance on the same evaluations and scenarios provides a clear answer to whether the new version should be deployed.

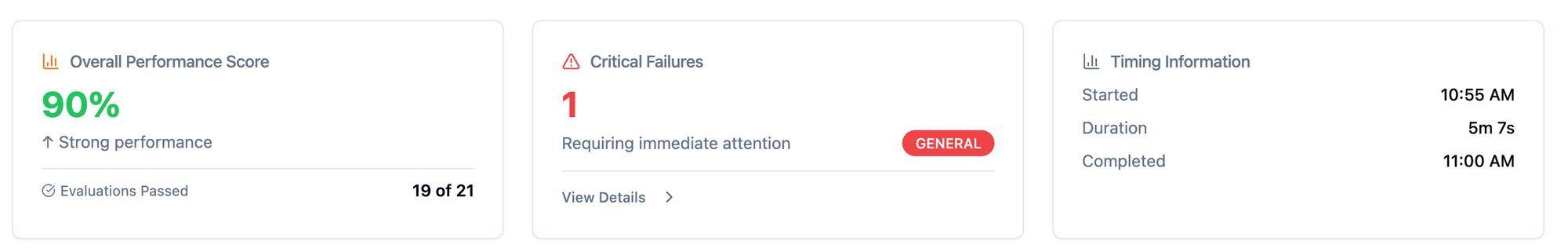

Below is an example from a UserTrace simulation run report, showing how quantitative metrics make this iteration loop measurable and actionable:

In this case, the agent achieved a 90% performance score, passing 19 out of 21 evaluations, with one critical failure flagged for review. These insights help teams decide whether a new version is ready for release or needs further refinement.

New behaviours often reveal new failure modes, triggering the creation of new evals and scenarios. Your evaluation framework should evolve over time, just like your agent.

"Don't Deploy Blind"

In the next generation of AI development, simulation will be both the safety net and the competitive advantage. It is much faster and safer to iterate in the early stages of development, especially during the design stage, than in the later stages after deployment to production. For this to be effective, pre-production simulations must closely represent real post-production user traces. The Turing test remains the right benchmark to ensure that these simulations truly reflect real user behaviour.

“In 2025, you don’t ship a model without evals.

In 2026, you won’t ship an agent without simulation.”

Most teams try to simulate users with synthetic prompts, generic personas, or manual testing. But none of these methods produce behaviour that truly matches production users and they often create a false sense of confidence.

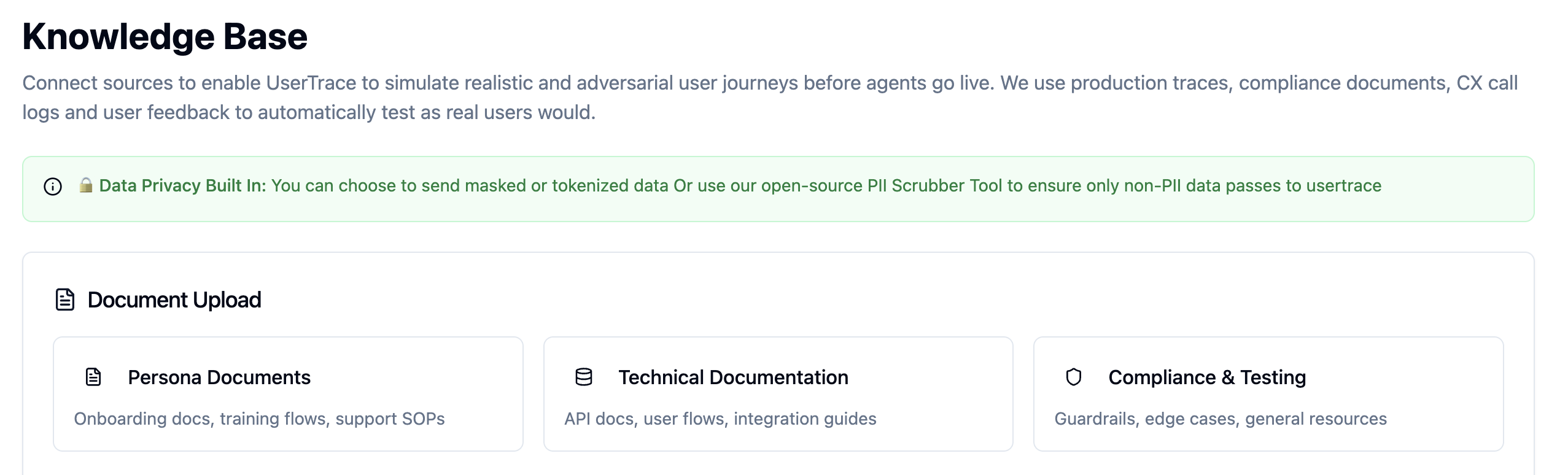

At UserTrace, we are building agents that help you simulate realistic user behaviour directly from production traces and user feedback not guesswork. This enables teams to validate their agents against real-world scenarios before anything reaches customers.

If you're building a customer-facing AI agent, we’d love to show you how top teams simulate before they ship. Come talk to us through this link.